Hugo T.M. Kussaba, Abdalla Swikir, Fan Wu, Anastasija Demerdjieva, Gitta Kutyniok, and Sami Haddadin

IFAC-PapersOnLine

Abstract

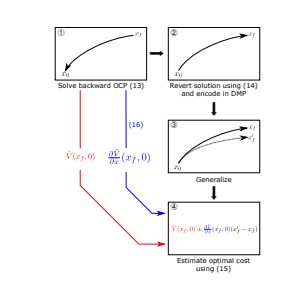

Computing optimal controls in real time is challenging. To address this difficulty, many frameworks use learning techniques to obtain (possibly suboptimal) controllers that can be used online. Among these, the optimal motion framework is a simple yet powerful technique that has succeeded in many complex real-world applications. Its main idea is to take advantage of dynamic motion primitives, a tool widely used in robotics to learn trajectories from demonstrations. While these demonstrations usually come from humans, the optimal motion framework relies on demonstrations derived from optimal solutions, such as those obtained by numerical solvers. As with many learning techniques, a drawback of this approach is that the suboptimality of learned solutions is hard to estimate, since finding easily computable, non-trivial upper bounds on the error between an optimal solution and a learned solution is, in general, infeasible. However, we show in this paper that this error can be estimated for a broad class of problems. Furthermore, we apply this estimation technique to obtain a novel and more efficient sampling scheme for use within the optimal motion framework, enabling its use in some scenarios where computational resources are limited.

@inproceedings{IFAC-PapersOnLine,
  title={Learning optimal controllers: a dynamical motion primitive approach},
  author={Hugo T.M. Kussaba and Abdalla Swikir and Fan Wu and Anastasija Demerdjieva and Gitta Kutyniok and Sami Haddadin},
  booktitle={IFAC-PapersOnLine},
  volume={56},
  number={2},
  pages={4776--4782},
  year={2023}
}